
Notice that three bootstrap samples have been created from the training dataset above. Then, multiple decision trees are created, and each tree is trained on a different data sample: However, since we usually only have one training dataset in most real-world scenarios, a statistical technique called bootstrap is used to sample the dataset with replacement. If we had more than one training dataset, we could train multiple decision trees on each dataset and average the results.

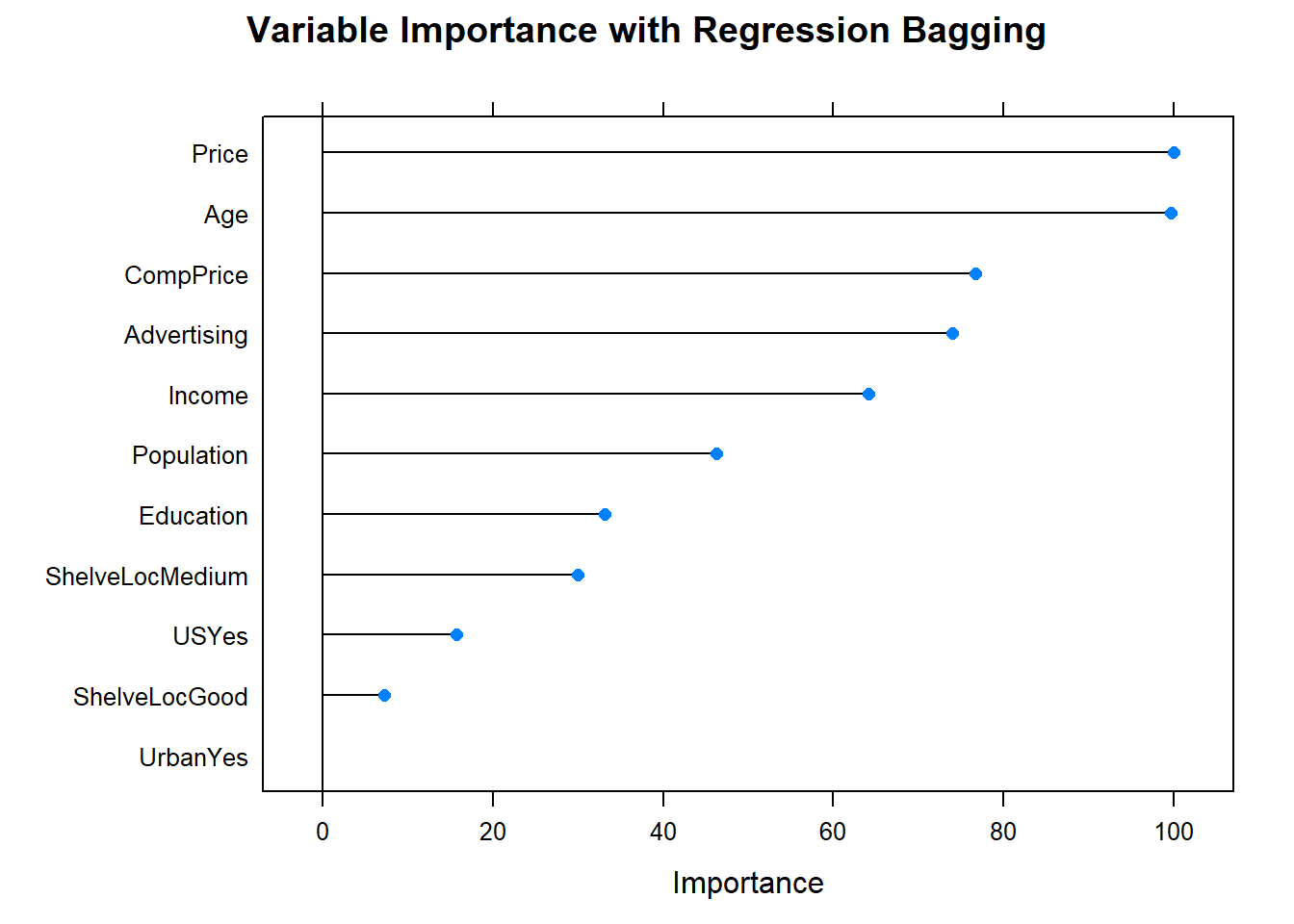

It works by averaging a set of observations to reduce variance. This is done using an extension of a technique called bagging, or bootstrap aggregation.īagging is a procedure that is applied to reduce the variance of machine learning models. The random forest algorithm solves the above challenge by combining the predictions made by multiple decision trees and returning a single output. How does the random forest algorithm work? A model like this will have high training accuracy but will not generalize well to other datasets. This means that the model is overly complex and has high variance. One of the biggest drawbacks of the decision tree algorithm is that it is prone to overfitting. Even a small change in the training dataset can make a huge difference in the logic of decision trees.Decision trees are prone to overfitting.They can partition data that isn’t linearly separable.They can be used for classification and regression problems.Decision trees are simple and easy to interpret.Now that you understand how decision trees work, let’s take a look at some advantages and disadvantages of the algorithm. Pros and Cons of the Decision Tree Algorithm: Branches: Branches connect one node to another and are used to represent the outcome of a test.Leaf nodes: Finally, these are nodes at the bottom of the decision tree after which no further splits are possible.Internal nodes: These are the nodes that split the data after the root node.The root node is the feature that provides us with the best split of data. Root node: A root node is at the top of the decision tree, and is the variable from which the dataset starts dividing.If you’d like to learn more about how to calculate information gain and use it to build the best possible decision tree, you can watch this YouTube video. The best tree is one with the highest information gain. Information gain is a metric that tells us the best possible tree that can be constructed to minimize entropy. 
The tree needs to find a feature to split on first, second, third, etc. Information gain measures the reduction in entropy when building a decision tree.ĭecision trees can be constructed in a number of different ways. Watch this YouTube video to learn more about how entropy is calculated and how it is used in decision trees. In this case, since the variable “Temperature” had a lower entropy value than “Wind”, this was the first split of the tree. The entropy value of this split is 0, and the decision tree will stop splitting on this node.ĭecision trees will always select the feature with the lowest entropy to be the first node. This is because when the temperature is extreme, the outcome of “Play?” is always “No.” This means that we have a pure split with 100% of data points in a single class. In the decision tree above, recall the tree had stopped splitting when the temperature was extreme: A value of 0 indicates a pure split and a value of 1 indicates an impure split. It determines how the decision tree chooses to partition data. What is the order used to construct a decision tree?Įntropy is a metric that measures the impurity of a split in a decision tree.How does a decision tree decide on the first variable to split on?.These observations lead to the following questions: It is only if the temperature is mild that it starts to split on the second variable. It also stops splitting when the temperature is extreme, and says that we should not go out to play. The example above is one that is simple, but encapsulates exactly how a decision tree splits on different data points until an outcome is obtained.įrom the visualization above, notice that the decision tree splits on the variable “Temperature” first. Let’s build a decision tree using this data: Depending on the temperature and wind on any given day, the outcome is binary - either to go out and play or stay home. This dataset consists of only four variables?-?“Day”, ‘‘Temperature’’, ‘‘Wind’’, and ‘‘Play?’’. 
How does the decision tree algorithm work?
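To make the idea of bagging more concrete, here is a minimal sketch in plain Python. It is not the article's implementation: the toy data, the function names (`bootstrap_sample`, `fit_stump`, `bagged_predict`), and the use of a one-split regression tree (a "stump") in place of a full decision tree are all assumptions made for illustration. It also shows only the bagging step (bootstrap samples plus averaging), not a complete random forest.

```python
import random
from statistics import mean


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows from the dataset, sampling with replacement."""
    return [rng.choice(rows) for _ in rows]


def fit_stump(rows):
    """Fit a one-split regression tree on (x, y) pairs.

    Tries a threshold between each pair of neighbouring distinct x values
    and keeps the one with the lowest squared error.
    """
    xs = sorted({x for x, _ in rows})
    overall = mean(y for _, y in rows)
    best = (float("inf"), None, overall, overall)
    for lo, hi in zip(xs, xs[1:]):
        threshold = (lo + hi) / 2
        left = [y for x, y in rows if x <= threshold]
        right = [y for x, y in rows if x > threshold]
        left_mean, right_mean = mean(left), mean(right)
        error = sum((y - left_mean) ** 2 for y in left)
        error += sum((y - right_mean) ** 2 for y in right)
        if error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    if threshold is None:
        # The sample contained a single distinct x: predict the overall mean.
        return lambda x: overall
    return lambda x: left_mean if x <= threshold else right_mean


def bagged_predict(rows, x, n_trees=3, seed=0):
    """Train one stump per bootstrap sample and average their predictions."""
    rng = random.Random(seed)
    models = [fit_stump(bootstrap_sample(rows, rng)) for _ in range(n_trees)]
    return mean(model(x) for model in models)


# Hypothetical toy dataset of (feature, target) pairs.
toy_rows = [(1, 1.0), (2, 1.2), (3, 0.9), (4, 3.1), (5, 2.9), (6, 3.0)]
print(bagged_predict(toy_rows, 5, n_trees=3))
```

Each model sees a slightly different sample of the same training data, so the individual stumps disagree a little, and averaging them smooths out those differences. Here `n_trees=3` mirrors the three bootstrap samples mentioned above.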
